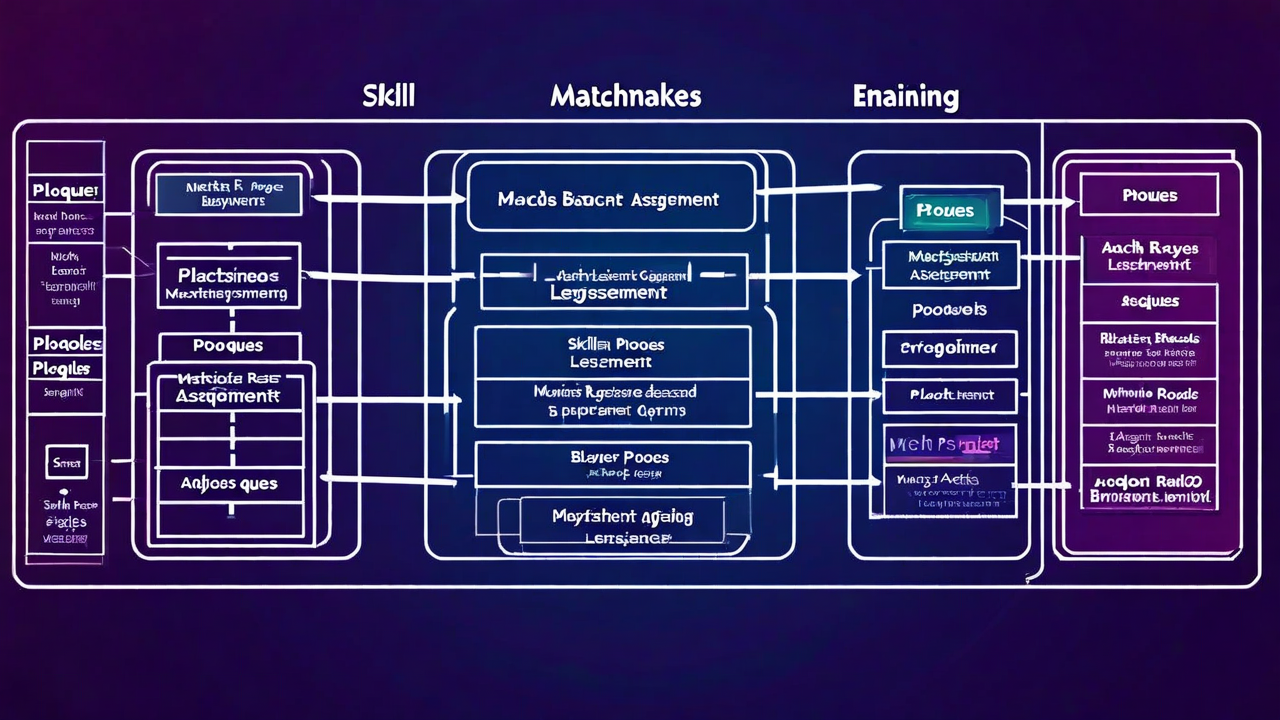

Small-scale matchmaking is straightforward. Find players with similar skill ratings, put them in the same game, done. It works fine at 10,000 concurrent players. At 50 million monthly actives — which works out to roughly 200,000–400,000 concurrent depending on timezone distributions — the entire problem changes shape.

This post covers what we learned running matchmaking infrastructure at that scale. Some of it is counterintuitive. Most of it was expensive to figure out.

The pool fragmentation problem hits sooner than you expect

At any given moment, your matchmaking pool is segmented by region, skill bracket, game mode, platform (if you support cross-play), and whatever other filters your game applies. Each additional filter axis multiplies the fragmentation.

50M monthly players sounds like an enormous pool. But after you filter for NA-West, competitive mode, Platinum-Diamond bracket, console-only, and solo queue, you might have 800 players available at 2pm on a Tuesday. That's barely enough to fill 80 games of 5v5 simultaneously.
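To see how quickly filters eat into a pool, here is a back-of-envelope sketch. It assumes players split evenly across every filter combination. Real traffic is skewed, so treat the result as an average: many pools will be shallower. The function names are illustrative only.

```python
from math import prod


def expected_pool_depth(online_players: int, axis_sizes: list[int]) -> float:
    """Average players per pool, assuming an even split across all filter combinations."""
    # Each filter axis multiplies the number of pools, so depth divides by the product.
    return online_players / prod(axis_sizes)


def max_concurrent_matches(pool_depth: float, players_per_match: int = 10) -> int:
    """Upper bound on full matches a pool can host at once (5v5 needs 10 players)."""
    return int(pool_depth // players_per_match)


# 800 players left after filtering can fill at most this many 5v5 games.
print(max_concurrent_matches(800))

# A hypothetical 1,200-player region split across 8 skill brackets x 4 game modes.
depth = expected_pool_depth(1200, [8, 4])
print(depth, max_concurrent_matches(depth))
```

Every axis you add divides the depth again. A pool that looks healthy at two axes can be unplayable at five.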

The naive fix is to expand match criteria when pool depth drops. That works until you have two pools expanding simultaneously and they start poaching players from each other. We saw this create oscillation artifacts where queue times in adjacent brackets would spike and crash in 90-second cycles as players shuffled between them.

The solution that actually worked: tiered expansion with explicit pool ownership. Each pool expands on its own schedule. When expansion boundaries overlap, a negotiation layer allocates contested players to the earliest-queued match. The result was no more oscillation and stable queue times across all brackets.
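A minimal sketch of tiered expansion plus earliest-queued negotiation follows. Several details are assumptions rather than the post's actual design: the tier schedule, the rating-window model, and the names `PendingMatch` and `negotiate`. Each match carries its owning pool's schedule, and its window widens only at fixed steps. Contested players are offered to matches in queue order, so a player is claimed exactly once per round and can't bounce between pools.

```python
from dataclasses import dataclass, field

# Hypothetical tier schedule: (seconds waited, rating half-width).
# The window only widens at these steps instead of growing continuously.
DEFAULT_TIERS = [(0, 50), (30, 100), (60, 200), (120, 400)]


def half_width(waited_s: float, tiers: list[tuple[float, int]]) -> int:
    """Rating half-width of the highest tier whose threshold has been reached."""
    width = tiers[0][1]
    for threshold, w in tiers:
        if waited_s >= threshold:
            width = w
    return width


@dataclass
class PendingMatch:
    match_id: str
    owner_pool: str        # pool that owns this match and its expansion schedule
    center_rating: float
    queued_at: float       # queue-entry time of the match's earliest player
    capacity: int = 10
    tiers: list[tuple[float, int]] = field(default_factory=lambda: list(DEFAULT_TIERS))
    members: list[str] = field(default_factory=list)

    def accepts(self, rating: float, now: float) -> bool:
        if len(self.members) >= self.capacity:
            return False
        return abs(rating - self.center_rating) <= half_width(now - self.queued_at, self.tiers)


def negotiate(players: dict[str, float], matches: list[PendingMatch], now: float) -> dict[str, str]:
    """Give each player to exactly one match. Where expanded windows overlap,
    the earliest-queued match gets first claim."""
    ordered = sorted(matches, key=lambda m: (m.queued_at, m.match_id))
    assignment: dict[str, str] = {}
    for player_id, rating in players.items():
        for match in ordered:
            if match.accepts(rating, now):
                match.members.append(player_id)
                assignment[player_id] = match.match_id
                break
    return assignment


a = PendingMatch("A", "gold", 1500, queued_at=0)
b = PendingMatch("B", "plat", 1650, queued_at=40)
print(negotiate({"p1": 1600, "p2": 1720, "p3": 1400}, [a, b], now=70))
```

In the example, `p1` falls inside both windows and goes to match A, because A has been queued longer. `p2` is only reachable by B.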

Skill rating drift during peak hours

Match quality degrades predictably during peak concurrency windows. More players means faster queue drain, which means your matchmaker is burning through calibrated matches faster than post-game rating updates can stabilize the pool. The effective skill window for "good" matches widens as the system tries to keep queue times acceptable.

At a 400,000-concurrent peak, we measured a 40% increase in average skill delta between opponents during the 8–10pm EST window compared with the 2–4am low-traffic window. Players feel this. They don't know why games feel less competitive on Friday nights; they just stop queueing.
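Here is one way to reproduce this kind of peak-versus-off-peak comparison from match logs, as a rough sketch. The post doesn't define "skill delta", so this assumes it means the gap between the two teams' average ratings. The record format and the sample data are made up.

```python
from statistics import mean


def window_skill_delta(records, start_hour: int, end_hour: int) -> float:
    """Average gap between the two teams' mean ratings, for matches whose
    start hour falls in [start_hour, end_hour)."""
    deltas = [
        abs(mean(team_a) - mean(team_b))
        for hour, team_a, team_b in records
        if start_hour <= hour < end_hour
    ]
    return mean(deltas)


def pct_increase(peak: float, baseline: float) -> float:
    return 100.0 * (peak - baseline) / baseline


# Hypothetical match log: (start hour, US Eastern; team A ratings; team B ratings).
records = [
    (20, [1500] * 5, [1570] * 5),
    (21, [1600] * 5, [1530] * 5),
    (2, [1500] * 5, [1550] * 5),
    (3, [1480] * 5, [1530] * 5),
]
peak = window_skill_delta(records, 20, 22)      # 8-10pm window
off_peak = window_skill_delta(records, 2, 4)    # 2-4am window
print(f"{pct_increase(peak, off_peak):.0f}% wider at peak")
```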

The fix requires decoupling queue time SLA from match quality SLA. Define acceptable match quality floors (max skill delta, min team balance score) as hard constraints, not soft preferences. Let queue times extend when pool conditions can't meet those floors. Display honest wait time estimates. Players tolerate 90-second queues when they understand the tradeoff. They don't tolerate bad matches at any queue time.
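To make "hard constraint" concrete, here is a minimal sketch. The threshold values are hypothetical, and so is the balance metric: it scores how close an Elo-style expected win chance is to 50%. The point is structural. Queue-time pressure may widen the set of candidates the matchmaker considers, but every candidate still passes through the same floor check, however long its players have waited.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class QualityFloor:
    # Hypothetical thresholds; each game mode would tune its own.
    max_skill_delta: float   # widest allowed rating gap between any two players
    min_balance: float       # 1.0 = coin flip, 0.0 = foregone conclusion


def balance_score(team_a: list[float], team_b: list[float]) -> float:
    """Assumed metric: 1 - 2 * |P(team A wins) - 0.5| under an Elo-style curve."""
    gap = mean(team_b) - mean(team_a)
    p_a_wins = 1.0 / (1.0 + 10 ** (gap / 400.0))
    return 1.0 - 2.0 * abs(p_a_wins - 0.5)


def meets_floor(team_a: list[float], team_b: list[float], floor: QualityFloor) -> bool:
    """Hard constraint: evaluated identically regardless of time spent in queue."""
    ratings = team_a + team_b
    if max(ratings) - min(ratings) > floor.max_skill_delta:
        return False
    return balance_score(team_a, team_b) >= floor.min_balance


floor = QualityFloor(max_skill_delta=300, min_balance=0.8)
print(meets_floor([1500, 1550, 1600, 1650, 1700], [1520, 1560, 1600, 1640, 1680], floor))
print(meets_floor([1500] * 5, [1800] * 5, floor))
```

A candidate that fails the floor is simply not started. Its players stay queued, and the client shows an updated wait estimate instead.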

Regional routing is about more than latency

The obvious approach: assign players to the nearest region, match within that region. Simple, low latency. Works great until a mid-sized region has 1,200 players at 11pm local time split across 8 skill brackets and 4 game modes. You're looking at 37 players per pool on average. Queue times go to 4+ minutes.

Cross-region matching requires latency budgeting. You need a real model of acceptable latency combinations. Two players at 28ms to a central server both feel fine. One player at 18ms and one at 52ms is already pushing the boundary for competitive play. Mixing a 14ms and a 71ms player in the same game is genuinely unfair.
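A latency budget needs at least two limits: an absolute ceiling per player and a cap on the spread between the fastest and slowest player. The sketch below assumes a 60ms ceiling and a 35ms spread cap. Both are hypothetical values, picked so the three examples above land where the text places them: the 18ms/52ms pair sits just inside the budget, and the 14ms/71ms pair is rejected.

```python
def fits_latency_budget(latencies_ms: list[float],
                        max_ms: float = 60.0,
                        max_spread_ms: float = 35.0) -> bool:
    """True if every player is at or under max_ms and the slowest player is
    no more than max_spread_ms behind the fastest. Thresholds are hypothetical."""
    if not latencies_ms:
        return True
    worst, best = max(latencies_ms), min(latencies_ms)
    return worst <= max_ms and worst - best <= max_spread_ms


print(fits_latency_budget([28, 28]))   # both players at the same latency
print(fits_latency_budget([18, 52]))   # 34ms spread: at the edge of the budget
print(fits_latency_budget([14, 71]))   # over the ceiling and over the spread cap
```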

We built a latency compatibility graph that precomputes acceptable cross-region pairings for each server location. The matchmaker queries this graph to determine which players can be pooled without the latency imbalance exceeding thresholds. At 14 regions, that is exactly 91 cross-region pairs (14 × 13 / 2), which is manageable as a precomputed lookup.
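A minimal sketch of that precomputation follows. It assumes a table of typical round-trip times from each player region to each server location, and it applies two hypothetical limits: a maximum latency and a maximum spread. The table layout and function names are illustrative, not the production schema. The expensive part runs offline; at match time, the check is a set lookup.

```python
from itertools import combinations


def build_compat_graph(latency_ms: dict[str, dict[str, float]],
                       max_ms: float = 60.0,
                       max_spread_ms: float = 35.0) -> dict[str, set[frozenset[str]]]:
    """For each server location, precompute which pairs of player regions may share
    a match there. latency_ms[region][server] is a typical RTT from region to server."""
    regions = sorted(latency_ms)
    servers = sorted({s for row in latency_ms.values() for s in row})
    graph: dict[str, set[frozenset[str]]] = {}
    for server in servers:
        edges: set[frozenset[str]] = set()
        for a, b in combinations(regions, 2):   # n * (n - 1) / 2 region pairs
            la, lb = latency_ms[a].get(server), latency_ms[b].get(server)
            if la is None or lb is None:
                continue  # no measured route from one of the regions
            if max(la, lb) <= max_ms and abs(la - lb) <= max_spread_ms:
                edges.add(frozenset((a, b)))
        graph[server] = edges
    return graph


def can_pool(graph: dict[str, set[frozenset[str]]], server: str,
             region_a: str, region_b: str) -> bool:
    """Constant-time lookup at match time (cross-region pairs only)."""
    return frozenset((region_a, region_b)) in graph.get(server, set())


# Hypothetical RTT table, in milliseconds.
latency = {
    "us-west": {"sea": 20, "chi": 55},
    "us-central": {"sea": 45, "chi": 15},
    "us-east": {"sea": 75, "chi": 25},
}
graph = build_compat_graph(latency)
print(can_pool(graph, "chi", "us-west", "us-east"))
print(sum(1 for _ in combinations(range(14), 2)))   # region pairs at 14 regions
```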

The party problem

Grouped players break every assumption your solo-queue matchmaker made. A party of 3 with mixed skill ratings creates a weighted average that doesn't correspond to any real player. A Diamond-Platinum-Gold stack has an average rating in low Diamond, but the skill variance within the team is enormous.

Worse: parties gravitate toward modes where coordination puts the opposing team at a disadvantage. If your matchmaker gives a 5-stack the same treatment as 5 solo players of similar average rating, the 5-stack will win that match significantly more often. Players notice. The rated mode fills with parties and solo players stop queueing.

The practical solution is party-aware matching pools. Solo queue is separate. Parties of 2–5 have their own pools, with adjusted skill weighting that accounts for a team coordination bonus. For full 5-stacks, that bonus can be as high as +120 effective rating points, depending on game type. The figure comes from historical win-rate data, not intuition.
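The post doesn't say how the coordination bonus is fitted. One standard way to convert a win rate into rating points assumes an Elo-style logistic model with the conventional 400-point scale. Under that model, a team that wins a fraction p of otherwise evenly rated matches plays like it is 400 · log10(p / (1 − p)) points stronger. A 5-stack winning about two thirds of evenly rated games then works out to roughly +120. The rating model, the function names, and the per-size bonus table are all assumptions for illustration.

```python
import math
from statistics import mean


def rating_bonus_from_win_rate(win_rate: float, scale: float = 400.0) -> float:
    """Solve the Elo expected-score curve E = 1 / (1 + 10**(-d / scale)) for d."""
    if not 0.0 < win_rate < 1.0:
        raise ValueError("win_rate must be strictly between 0 and 1")
    return scale * math.log10(win_rate / (1.0 - win_rate))


def effective_party_rating(ratings: list[float], bonus_by_size: dict[int, float]) -> float:
    """Mean member rating plus the coordination bonus for this party size."""
    return mean(ratings) + bonus_by_size.get(len(ratings), 0.0)


# Hypothetical: a full 5-stack that wins 2/3 of evenly rated matches.
five_stack_bonus = rating_bonus_from_win_rate(2 / 3)
print(round(five_stack_bonus, 1))

# Sizes 2-4 would be fitted the same way from their own win rates.
bonus_by_size = {5: five_stack_bonus}
print(effective_party_rating([2000, 2000, 2000, 2000, 2000], bonus_by_size))
```

Matching the stack on its effective rating, rather than its raw mean, is what keeps its opponents' expected win chance near 50%.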

Session server allocation is downstream of matchmaking

Most teams treat matchmaking and server allocation as separate systems that communicate at match completion. Matchmaker finds a match, publishes an event, allocator spins up or claims a server, players connect. That pipeline works at small scale with generous timeouts.

At high concurrent volume, allocation latency becomes visible. If it takes 3–8 seconds to allocate a server after match formation, players see that as post-queue wait time. They drop. We measured 12% match abandonment during the allocation window in one deployment — players who completed matchmaking but left before connecting to the server.

The answer is speculative pre-allocation. The matchmaker signals allocation intent as soon as it starts forming a match, before the match is confirmed. Most of those speculative allocations convert to real matches; the ones that don't leave a warm server that goes back to the pool. The cost is roughly 8% wasted allocations. The win is sub-second server assignment and near-zero post-queue abandonment.
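A minimal sketch of the reservation lifecycle follows, assuming a pool of already-warm servers identified by string IDs. The class name, its hooks, and the waste counter are hypothetical. The matchmaker would call `on_match_forming` when it starts assembling a match, then either `on_match_confirmed` or `on_match_abandoned`. A missed speculation costs a reservation, not a server: the server goes straight back to the idle pool, still warm.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class SpeculativeAllocator:
    """Hypothetical allocator that reserves a warm server while a match is still forming."""
    idle: deque = field(default_factory=deque)     # warm, unassigned server IDs
    reserved: dict = field(default_factory=dict)   # tentative match ID -> server ID
    speculated: int = 0
    wasted: int = 0

    def on_match_forming(self, tentative_id: str):
        """Signal intent early: claim a warm server before the match is confirmed."""
        if tentative_id in self.reserved:
            return self.reserved[tentative_id]
        if not self.idle:
            return None  # nothing warm; fall back to the normal allocation path
        server = self.idle.popleft()
        self.reserved[tentative_id] = server
        self.speculated += 1
        return server

    def on_match_confirmed(self, tentative_id: str):
        """The server is already warm, so assignment is just a dictionary pop."""
        return self.reserved.pop(tentative_id, None)

    def on_match_abandoned(self, tentative_id: str) -> None:
        """Speculation missed: return the still-warm server to the idle pool."""
        server = self.reserved.pop(tentative_id, None)
        if server is not None:
            self.idle.append(server)
            self.wasted += 1

    def waste_ratio(self) -> float:
        return self.wasted / self.speculated if self.speculated else 0.0


alloc = SpeculativeAllocator(idle=deque(["srv-1", "srv-2"]))
alloc.on_match_forming("m1")
alloc.on_match_forming("m2")
print(alloc.on_match_confirmed("m1"))   # already warm, no allocation wait
alloc.on_match_abandoned("m2")          # srv-2 returns to the idle pool
print(alloc.waste_ratio())
```

In production, `waste_ratio` would be the number to track against the roughly 8% figure above.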

What actually matters at scale

Queue time and match quality aren't one tradeoff — they're two separate systems that need independent tuning. Get your pool depth monitoring right. Build the party-aware pools before you need them. Speculative pre-allocation pays for itself in retention numbers alone. And be honest with players about wait times. They'll accept a long queue. They won't accept a bad match after a short one.

Matchmaking infrastructure that scales with your player count

GameStack's matchmaking layer handles pool management, cross-region routing, and server pre-allocation. Start with what you need and scale from there.
